Bose AI Integration in Ultra Open Earbuds

Overview

Collaborated with the Bose design thinking team to imagine a future in which AI virtual assistants are integrated into Bose’s wearables.

Timeline

January 2024 - May 2024

Collaborators

Ashley Kim (me!)

Eli Marcus

Aditi Mehndiratta

Avalon Edwards

My Role

UX Design - Interaction Design, User Research

Problem

🎵 Envisioning the Future of AI-Integrated All-Day Earbuds

To motivate Bose users to switch to Ultra Open Earbuds, our team explored the current landscape of AI technology, looking for opportunities for innovation.

The Solution

🌟 Inspiring Users Through Innovative Storytelling

Users weren’t very excited about AI-integrated wearables until we changed our project approach and interview strategy. Instead of designing around the current gripes and limitations of AI, we created storyboards presenting hypothetical real-life scenarios that showed how our ideated functions could impact the user’s life, along with a complete film that brought these storyboarded features to life.

As a result, we received unanimously enthusiastic feedback from both users and the Bose design thinking team, and our ideas are expected to roll out in future Bose products in 2025-2026.

Initial Approach

💭 Rethinking AI Integrated Wearables

We started with a 14-week structured project plan to identify pain points and inform our design directions:

🔎 Competitive Analysis: We analyzed products from AI startups, such as the Humane AI Pin and Rabbit R1, identifying how AI wearables are currently failing to meet user expectations.

🤖 Field Research: Using ChatGPT-4+, we simulated real-world AI interactions and identified key issues and limitations with AI as it is today.

☝️ User Interviews: We conducted 15 in-depth interviews to meet users where they are.

Market Research

🔎 Identifying the current scope of AI Technology

Early 2024 was a significant period for AI hardware startups like Humane Inc. (Humane AI Pin) and Rabbit Inc. (Rabbit R1, designed in collaboration with Teenage Engineering), as they released some of the first AI-integrated devices to receive massive media attention.

However, our research quickly revealed a major weakness in today’s AI wearable technology: in their current state, AI wearables often struggled with real-time responsiveness, offered limited use cases, and did not integrate seamlessly into daily routines.

Field Research

🤖 AI in the world

Our team conducted hands-on tests using the ChatGPT-4+ model to simulate real-world usage, walking around campus and interacting with the AI through our phones’ microphones.

While we found that ChatGPT excelled in active conversations, improving as users provided more context, we also identified key limitations:

It lacked access to websites, particularly Google Maps, public transit sites, and business-specific webpages.

It frequently provided incorrect information with confidence, a well-documented issue since ChatGPT’s debut in late 2022.

User Interviews

☝️ Addressing Concerns Over AI technology

To understand how our target demographic interacts with AI, particularly in voice-assisted formats, our team conducted 15 in-depth interviews. We focused on how users engaged with ChatGPT-4’s voice-operated function, identifying usage patterns and preferences for AI-integrated wearables.

However, while users found AI technology useful in certain contexts, they did not find its features compelling enough to justify purchasing AI-assisted devices.

“My main issue is that voice-operated systems often don’t understand what I am saying and so it is more of a hassle to use it than a time-saver. If it worked like it was supposed to, I would be more inclined to incorporate a voice-operated system into my daily life.”

Main Insight

💡 Time to get Ambitious

Overall, we condensed our team’s findings from interviews into 3 insights:

AI devices don’t meet users’ expectations.

People view AI as a work tool, not a tool for everyday life.

People view AI chatbots and voice assistants as tools for their professional and academic lives, not their personal or social lives.

AI assistants need external app integration.

Most of the use cases people were excited about when imagining interactions with an AI assistant require it to connect with their external apps, which ChatGPT couldn’t do in our testing.

During our research phase, it quickly became clear that users didn’t find value in AI-integrated wearables in their current state. To generate more interest and refine our direction, our team took a different approach: ideating and presenting specific everyday scenarios where our product could shine.

User Interview Part 2

✨ Shifting Perspectives

Our team shifted our interview approach, moving away from questions about current-day products toward scenarios where users could envision our AI wearable in their daily lives. By presenting tangible use cases, we transformed initial indifference into excitement, receiving universally positive responses about our proposed product along with more valuable feedback.

Personas

Link to the full in-depth personas here

Storyboarding

📖 Creating Functions by Telling Stories

Using our developed personas and synthesized interview data, we created storyboards to illustrate our ideated AI features in real-world settings, refining these concepts with further testing and feedback.

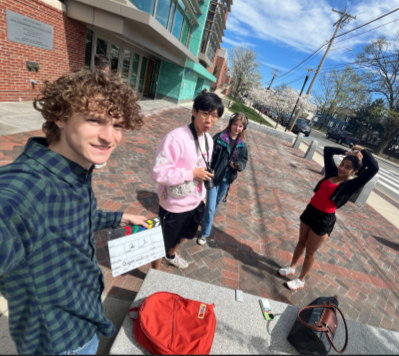

Filming

📽️ Bringing Our Vision to Life

After successfully testing our storyboards for clarity and effectiveness, our team set out to translate these concepts into a demonstration film. I took on the role of filming and editing, keeping our scenes clear by writing effective subtitles and adjusting footage. This final piece would become our compelling pitch to the Bose design thinking team.

Final Deliverable

Bose joins the AI device thinking space!

Link to Final Presentation

Reflection

How my design thinking mind has changed

This was my first time working with a big-name company in my design career (let alone a company whose products I’ve been using since 2016!), and I was thankful for the opportunity to ideate for the future of AI-integrated wearables, which also helped me improve my design thinking and approach. Here’s what I learned from this project:

💡Design isn’t always about solving existing problems; it’s also about changing perspectives. Though users initially had no interest in the product we were developing, engagement skyrocketed once we transitioned from working with AI as it is today to presenting our vision of what AI could be.

✏️Using Unconventional Research Techniques. A pivotal moment in our project was departing from the techniques we practiced in class and adopting unconventional research techniques, like creating and iterating on storyboards instead of the usual user surveys and interviews.

📚Storytelling is Key in Design. My role in editing our film and presenting our pitch reinforced how effectively storytelling communicates our ideas and helps them resonate with our users.

In summary, this project was an excellent first deep dive into the design thinking world outside the comfort of my school. I was excited about it from beginning to end because it encouraged me to go beyond solving user pain points. It helped me engage with my user base to create experiences that offered new ways of thinking, which heavily stimulated my creative mind. I learned how to bridge the gap between my concepts and the user’s reality to create a vision that users didn’t know they wanted, but one I know they’re excited about.

Excited to see our visions come to life in Bose’s future lineup! 💪